MemU Complete Guide 2026: Proactive AI Memory Framework for Always-On Agents

Every AI agent you have ever used has the same fundamental flaw: amnesia. The conversation ends, the context vanishes, and the next session starts from zero. MemU exists to solve exactly this problem. Built by NevaMind-AI, memU is an open-source memory framework designed for AI agents that need to run 24/7, remember everything, and act proactively — not just respond to commands. If you are building always-on assistants, trading bots, DevOps monitors, or any system where an AI agent must maintain long-term context across days and weeks without burning through your entire API budget, memU is the infrastructure layer you have been missing.

This guide covers everything you need to know about memU in 2026: what it is and how it works, who benefits most from deploying it, how to install and configure memU with PostgreSQL and in-memory backends, the most valuable real-world use cases, practical lifehacks for getting the best results, integration with platforms like OpenClaw and LangGraph, and even whether memU can play a role in counter-drone defense systems. We go deep on the technical architecture while keeping the language accessible, because the developers who need memU most are often too busy building agents to spend three days deciphering documentation.

At A-Bots.com, we specialize in custom mobile application development, chatbot integrations, and IoT solutions — exactly the kind of complex, real-time systems where memU's persistent memory capabilities create the most value. With over 70 completed projects spanning healthcare, logistics, retail, and smart home automation, our team has hands-on experience building the backend infrastructure that makes AI agents reliable in production. If you need a custom mobile app powered by memU's proactive intelligence, or professional QA testing for an existing AI agent deployment, A-Bots.com delivers production-ready solutions from architecture to launch.

A-Bots.com also provides end-to-end testing and security auditing for AI-powered applications. Deploying an autonomous agent with persistent memory is not a one-time setup — it requires ongoing monitoring, memory hygiene, and performance optimization. Our QA engineers test memory retrieval accuracy, token cost efficiency, and proactive trigger reliability under real-world conditions. With client partnerships spanning over five years, A-Bots.com is the kind of long-term technical partner that memU-based projects demand.

"My AI agent remembered my coffee order from three weeks ago but forgot it was supposed to file my taxes. Priorities, I guess." — Anonymous memU user on Discord

What Is MemU and How Does It Work

MemU is an open-source agentic memory framework created by NevaMind-AI. Released on PyPI as memu-py in February 2026, memU provides the persistent memory layer that LLM-based agents need to operate continuously without losing context between sessions. The project has accumulated over 12,000 GitHub stars and supports Python 3.13 and above, with integrations for Claude, OpenAI GPT, Google Gemini, DeepSeek, Qwen, Grok, and OpenRouter.

The core problem memU solves is what developers call the "amnesia problem." Large language models forget everything once a session ends. Maintaining awareness through massive context windows is prohibitively expensive — running an always-on agent with full context costs thousands of dollars monthly in API tokens. Standard RAG (Retrieval-Augmented Generation) systems help by fetching relevant documents, but they do not give agents structured, persistent memory that evolves over time.

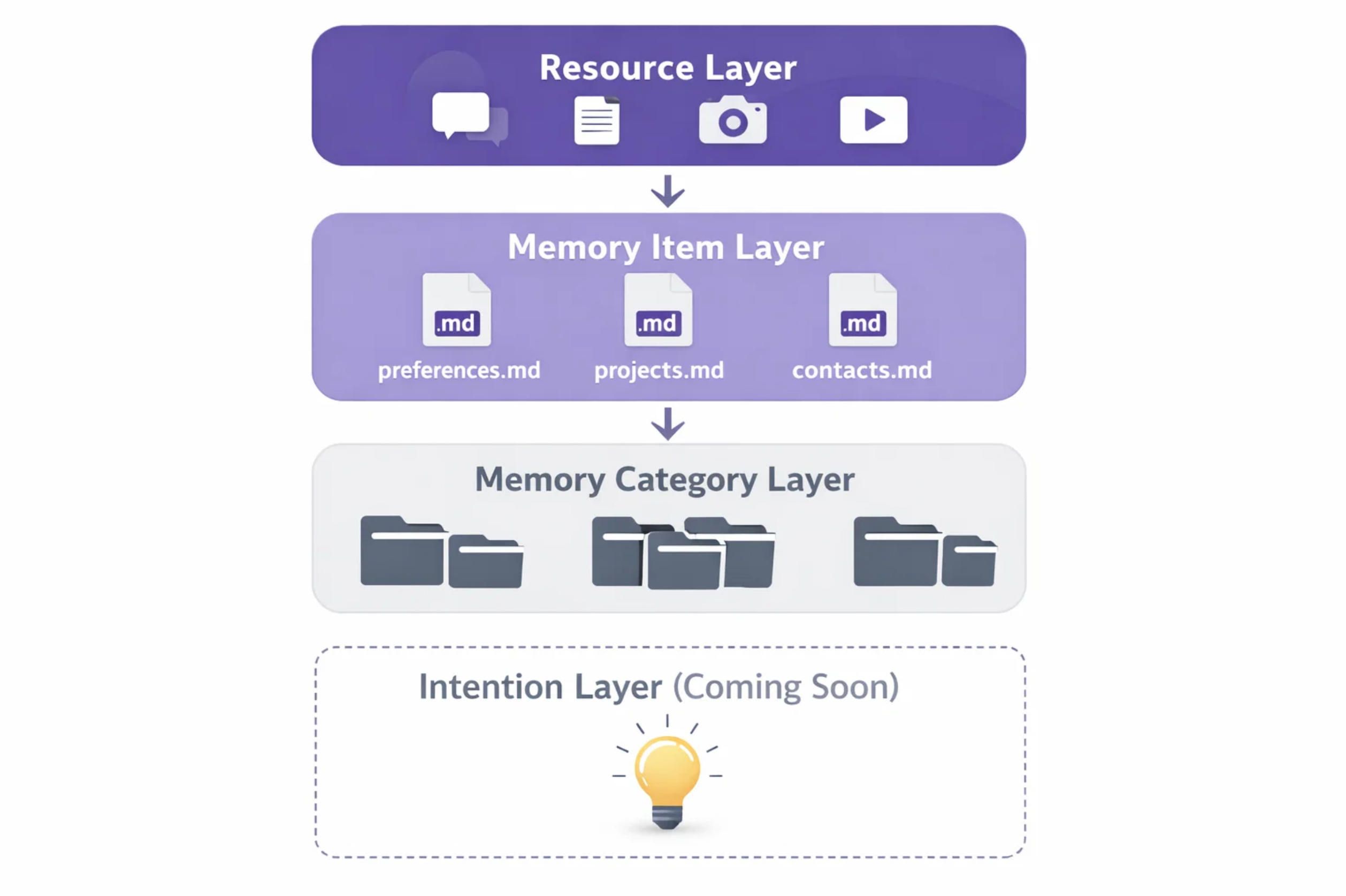

MemU takes a fundamentally different approach. Instead of treating memory as a flat collection of text chunks, memU organizes information into a three-layer hierarchical structure modeled after a file system. Memory categories function as folders, memory items function as files, and cross-references function as symlinks. This structure enables the memU agent to autonomously decide what to remember, how to categorize it, and when to update or forget outdated information.

The three layers of the memU architecture are the Resource Layer (raw data ingestion from conversations, documents, images, and videos), the Memory Item Layer (extracted facts, preferences, and knowledge units automatically categorized and stored as Markdown files), and the Memory Category Layer (high-level organizational structure that groups related memory items for efficient retrieval). A fourth layer — the Intention Layer — is currently in development and represents memU's most ambitious feature: predicting user needs based on accumulated patterns and acting proactively before the user even asks.
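The file-system analogy can be made concrete with a small data model. The sketch below is illustrative only: the class names `MemoryItem`, `MemoryCategory`, and `MemoryStore`, and all field names, are hypothetical and are not memU's actual classes.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    """A 'file': one extracted fact or preference (hypothetical shape)."""
    item_id: str
    text: str
    source: str                                      # resource the fact came from
    links: list[str] = field(default_factory=list)   # 'symlinks' to other item ids


@dataclass
class MemoryCategory:
    """A 'folder' that groups related items."""
    name: str
    items: dict[str, MemoryItem] = field(default_factory=dict)


class MemoryStore:
    """Toy in-memory stand-in for the category -> item hierarchy."""

    def __init__(self) -> None:
        self.categories: dict[str, MemoryCategory] = {}

    def add(self, category: str, item: MemoryItem) -> None:
        # Create the category on first use, much like `mkdir -p`.
        folder = self.categories.setdefault(category, MemoryCategory(category))
        folder.items[item.item_id] = item

    def resolve_links(self, item: MemoryItem) -> list[MemoryItem]:
        # Follow cross-references across all categories, skipping dangling ids.
        index = {i.item_id: i for c in self.categories.values() for i in c.items.values()}
        return [index[link] for link in item.links if link in index]


store = MemoryStore()
editor = MemoryItem("pref-editor", "Uses Cursor as primary editor", "chat-2026-02-01")
lang = MemoryItem("pref-lang", "Writes mostly Python", "chat-2026-02-03", links=["pref-editor"])
store.add("preferences/tools", editor)
store.add("preferences/languages", lang)
linked = store.resolve_links(lang)
```

Here `linked` holds the editor preference, reached from a different category through the cross-reference, which is the role the article assigns to symlinks.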

MemU's dual-mode retrieval system is what makes it economically viable for 24/7 operation. The system switches between two modes: a cheap monitoring mode that uses lightweight embedding-based lookups for routine observations, and a deep reasoning mode that triggers full LLM calls only when the situation requires genuine understanding. This approach reduces token costs to approximately one-tenth of what comparable always-on agents would consume.
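The two-mode idea can be sketched as a simple router: answer from a cheap similarity lookup when the match is strong, and escalate to an expensive call only when it is not. This is a minimal sketch under stated assumptions, not memU's implementation: the function names, the `threshold` value, and the use of cosine similarity over toy two-dimensional vectors are all illustrative.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, memory, deep_reason, threshold=0.8):
    """Return (mode, answer). `memory` is a list of (vector, text) pairs.

    Cheap path: nearest-neighbour lookup over stored embeddings.
    Expensive path: call `deep_reason` (a stand-in for an LLM call) only
    when the best match scores below `threshold`.
    """
    best_score, best_text = max(
        ((cosine(query_vec, vec), text) for vec, text in memory),
        default=(0.0, None),
    )
    if best_score >= threshold:
        return "monitor", best_text
    return "reason", deep_reason(query_vec, best_text)


memory = [([1.0, 0.0], "User prefers email"), ([0.0, 1.0], "User trades tech stocks")]
fake_llm = lambda query, hint: f"LLM answer (hint: {hint})"   # placeholder for a real model call

strong = retrieve([0.9, 0.1], memory, fake_llm)   # close to the first vector
weak = retrieve([0.7, 0.7], memory, fake_llm)     # ambiguous between both
```

The first query resolves in monitoring mode without touching the model; the second falls below the threshold and is escalated. Where the threshold sits determines how often the expensive path runs.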

MemU stores all memories as human-readable Markdown files. You can open them in any text editor and see exactly what your AI agent remembers. This transparency is a deliberate design choice — unlike black-box vector databases, memU gives you full visibility into your agent's knowledge base.

Who Needs MemU

MemU is not for every use case. If you are building a simple chatbot that answers questions without needing to remember previous conversations, a standard vector database like Pinecone or Weaviate is simpler and cheaper. If your agent only needs session-level context, LangChain's built-in memory modules handle that well.

MemU shines when your AI agent needs to run continuously and maintain context over days, weeks, or months. The primary audiences for memU include developers building personal AI assistants that genuinely learn user preferences over time, fintech teams creating trading bots that monitor markets 24/7 and make decisions based on historical behavior patterns, DevOps engineers deploying infrastructure monitoring agents that anticipate issues based on past incidents, companies building AI companions or emotional support applications where continuity of memory creates trust, and enterprise teams that need multi-tenant agent deployments with isolated memory stores per user.

The memU framework is also increasingly relevant for anyone integrating AI agents with real-world automation systems. If your agent manages calendars, sends emails, monitors sensors, or controls IoT devices, it needs to remember user preferences, past decisions, and contextual patterns to avoid making the same mistakes twice. This is where A-Bots.com's expertise in IoT solutions and real-time messaging systems becomes directly valuable — building the bridge between memU's memory intelligence and the physical world requires exactly the kind of cross-platform mobile development, backend orchestration, and hardware integration that our team delivers daily.

Installing MemU: Step by Step

MemU requires Python 3.13 or higher and an API key for your LLM provider (OpenAI by default, though memU supports multiple providers). The installation process is straightforward, but the infrastructure requirements differ depending on your storage backend.

Quick Start with In-Memory Storage

For development and testing, you can run memU entirely in memory with no database required:

pip install memu-py

Set your API key:

export OPENAI_API_KEY=your_api_key

To run the test suite, clone the repository and install its dependencies:

git clone https://github.com/NevaMind-AI/memU.git

cd memU

make install

cd tests

python test_inmemory.py

This gives you a working memU instance that stores memory in RAM. Data will not persist between restarts, but it is the fastest way to evaluate whether memU fits your use case.

Production Setup with PostgreSQL

For production deployments where memory must survive restarts and scale across multiple agents, memU uses PostgreSQL with the pgvector extension. Start the database using Docker:

docker run -d \
  --name memu-postgres \
  -e POSTGRES_USER=postgres \
  -e POSTGRES_PASSWORD=postgres \
  -e POSTGRES_DB=memu \
  -p 5432:5432 \
  pgvector/pgvector:pg16

From the repository root, run the persistent storage test to verify the connection:

export OPENAI_API_KEY=your_api_key

cd tests

python test_postgres.py

The PostgreSQL DSN defaults to postgresql://postgres:postgres@localhost:5432/memu. You can override this through environment variables in your .env file.
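A small helper can make the default-plus-override behavior explicit in your own code. The sketch below assumes a variable named `MEMU_POSTGRES_DSN`; that name is hypothetical and chosen for illustration, so check the project's configuration reference for the variable memU actually reads.

```python
import os
from urllib.parse import urlsplit

DEFAULT_DSN = "postgresql://postgres:postgres@localhost:5432/memu"


def resolve_dsn(env=None) -> str:
    """Return the DSN from the environment, falling back to the default.

    MEMU_POSTGRES_DSN is a hypothetical variable name used for illustration.
    """
    env = os.environ if env is None else env
    return env.get("MEMU_POSTGRES_DSN", DEFAULT_DSN)


def describe(dsn: str) -> dict:
    """Split a DSN into parts, masking the password so the result is safe to log."""
    parts = urlsplit(dsn)
    return {
        "host": parts.hostname,
        "port": parts.port,
        "database": parts.path.lstrip("/"),
        "user": parts.username,
        "password": "***" if parts.password else None,
    }


summary = describe(resolve_dsn({}))   # empty mapping -> default DSN
```

Logging `summary` at startup shows which database the agent will connect to without leaking credentials.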

MemU Server Deployment

For teams that need a REST API interface, NevaMind-AI provides memU-server — a FastAPI wrapper that exposes memU's capabilities as HTTP endpoints:

git clone https://github.com/NevaMind-AI/memU-server.git

cd memU-server

export OPENAI_API_KEY=your_api_key

uv sync

uv run fastapi dev

The server runs on http://127.0.0.1:8000. For full infrastructure including PostgreSQL and the Temporal workflow engine, use Docker Compose:

docker compose up -d

This starts three services: PostgreSQL on port 5432 (database with pgvector extension), Temporal on port 7233 (workflow engine gRPC API), and Temporal UI on port 8088 (web management interface).
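After bringing the stack up, it can help to confirm that the three ports accept connections before pointing an agent at them. The following is a generic TCP check, not a memU tool; the service labels simply mirror the ports listed above, and a successful connection only shows that something is listening, not that the service is healthy.

```python
import socket

# Ports as listed for the Docker Compose stack.
SERVICES = {"PostgreSQL": 5432, "Temporal gRPC": 7233, "Temporal UI": 8088}


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_stack(host: str = "127.0.0.1") -> dict[str, bool]:
    """Map each service label to whether its port accepted a connection."""
    return {name: port_open(host, port) for name, port in SERVICES.items()}


if __name__ == "__main__":
    for name, ok in check_stack().items():
        print(f"{name}: {'up' if ok else 'down'}")
```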

MemU Cloud API

If you prefer not to self-host, memU offers a hosted API with usage-based pricing and a free starting tier. The base URL is https://api.memu.so, and authentication uses Bearer tokens. The API exposes four primary endpoints: memorize (register a continuous learning task), status (check processing progress), categories (list auto-generated memory categories), and retrieve (query memory with semantic search and proactive context loading).
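Bearer authentication means every request carries an `Authorization: Bearer <token>` header. The sketch below builds such a request with the standard library and deliberately does not send it. The base URL comes from the text above; the route `/retrieve` and the payload field `query` are assumptions made for illustration, so take the real paths and request bodies from the official API reference.

```python
import json
import urllib.request

BASE_URL = "https://api.memu.so"


def build_request(path: str, token: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) an authenticated JSON POST request.

    `path` is supplied by the caller; real route names and payload fields
    must come from the official API documentation.
    """
    return urllib.request.Request(
        url=f"{BASE_URL}/{path.lstrip('/')}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical route and payload, for illustration only.
req = build_request("/retrieve", token="YOUR_TOKEN", payload={"query": "editor preference"})
# urllib.request.urlopen(req) would send it; omitted here on purpose.
```

Keeping request construction separate from sending makes it easy to unit-test headers and to keep the token out of logs.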

"Installing memU was the easiest part. Explaining to my boss why the AI agent remembers his birthday but not the sprint deadline — that was the hard part." — DevOps engineer on Reddit

MemU Architecture: The Three-Layer Memory System

Understanding memU's architecture is essential for getting the best performance from the framework. The three-layer system is what separates memU from simple RAG implementations.

Layer 1: Resource Layer

The Resource Layer handles raw data ingestion. MemU accepts multimodal inputs including text conversations, documents (PDF, Word, Markdown), images (PNG, JPG — processed via vision models), and even audio and video files. Each input is processed through the memU pipeline, which extracts structured information and passes it to the next layer.

For images, a vision model generates descriptions and extracts visual concepts. For audio, speech-to-text transcription produces text that is then analyzed for key points and preferences. For video, the system combines frame analysis with audio transcription. All modalities are unified into the same hierarchical structure, enabling cross-modal retrieval — meaning your agent can recall visual information when processing a text query.

Layer 2: Memory Item Layer

The Memory Item Layer is where memU's intelligence lives. The Memory Agent — a specialized LLM component — automatically extracts facts, preferences, opinions, and habits from raw data and stores them as structured memory items in Markdown format. Each memory item includes metadata like timestamps, source references, and confidence scores.
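To make the idea of a Markdown memory item with metadata concrete, here is one possible rendering. The layout and field names are assumptions for illustration; memU's actual file format may differ.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class MemoryItem:
    """Hypothetical shape of one memory item and its metadata."""
    text: str
    source: str
    confidence: float
    created_at: datetime


def to_markdown(item: MemoryItem) -> str:
    """Render one item as a Markdown list entry with indented metadata."""
    return (
        f"- {item.text}\n"
        f"  - created: {item.created_at.isoformat()}\n"
        f"  - source: {item.source}\n"
        f"  - confidence: {item.confidence:.2f}\n"
    )


item = MemoryItem(
    text="Prefers email over calls",
    source="conversation-0042",
    confidence=0.9,
    created_at=datetime(2026, 2, 10, 9, 30, tzinfo=timezone.utc),
)
markdown = to_markdown(item)
```

Because the output is plain Markdown, it can be opened in any editor and diffed line by line.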

The key difference between memU and manual knowledge management is autonomy. You do not tell the memU agent what to remember. The Memory Agent decides on its own: it extracts relevant facts, checks existing memory for conflicts or duplicates, and decides whether to ADD a new item, UPDATE an existing one, or let it naturally decay through non-use. For example, if you tell your memU-powered agent "I switched from VS Code to Cursor last week," the Memory Agent will find the existing preference entry for VS Code, update it to reflect the switch, and preserve the historical note that you previously used VS Code.
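The add-or-update decision in the editor example can be sketched in a few lines. In memU an LLM component is described as detecting conflicts; the sketch below substitutes exact key matching for that step, and the names `Preference` and `reconcile` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Preference:
    key: str                                           # e.g. "editor"
    value: str                                         # current value
    history: list[str] = field(default_factory=list)   # earlier values, oldest first


def reconcile(store: dict[str, Preference], key: str, value: str) -> str:
    """Apply one extracted fact and report which action was taken."""
    existing = store.get(key)
    if existing is None:
        store[key] = Preference(key, value)
        return "ADD"
    if existing.value == value:
        return "NOOP"                          # duplicate fact, nothing to change
    existing.history.append(existing.value)    # keep the historical note
    existing.value = value
    return "UPDATE"


store: dict[str, Preference] = {}
first = reconcile(store, "editor", "VS Code")    # nothing stored yet
second = reconcile(store, "editor", "Cursor")    # conflicts with the stored value
```

After the second call the stored preference is `Cursor`, and `VS Code` remains in `history`, matching the behavior described in the example above.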

Layer 3: Memory Category Layer

The Memory Category Layer provides high-level organizational structure. Categories are automatically generated based on the content of memory items — you do not need to define a taxonomy in advance. As your agent accumulates memories, the category structure evolves organically to reflect the domains of knowledge it has gathered.

The Intention Layer (Coming Soon)

The fourth layer currently in development is the Intention Layer — memU's most ambitious component. This layer analyzes accumulated memories to predict user needs before they are expressed. Instead of waiting for a command, the agent proactively offers suggestions, prepares answers, and initiates actions based on behavioral patterns. NevaMind-AI describes this as "cognitive anticipation," and it represents the transition from reactive AI assistants to genuinely proactive digital coworkers.

MemU vs Mem0 vs LangChain Memory

Choosing the right memory framework depends on your specific requirements. Here is how memU compares to the two most common alternatives.

LangChain offers several memory types: ConversationBufferMemory, ConversationSummaryMemory, VectorStoreRetrieverMemory, and ConversationEntityMemory. These work well for maintaining context within a single session, but out of the box they neither persist across sessions nor enable proactive monitoring. If your conversations are under 50 turns and do not require cross-session memory, LangChain is simpler.

Mem0 is a memory layer, available as an open-source library and as a managed platform, that provides cross-session persistence with automatic fact extraction. It works well as a plug-in for existing agents. However, memU goes further by organizing memory hierarchically, supporting multimodal inputs natively, planning proactive behavior through the Intention Layer (still in development), and storing everything as transparent Markdown files rather than opaque vectors.

MemU's benchmark results support its claims: the framework achieves 92.09% average accuracy on the Locomo benchmark across all reasoning tasks, significantly outperforming competitors in fact recall, temporal reasoning, and entity relationship mapping.

Real-World Use Cases for MemU

Trading and Financial Monitoring

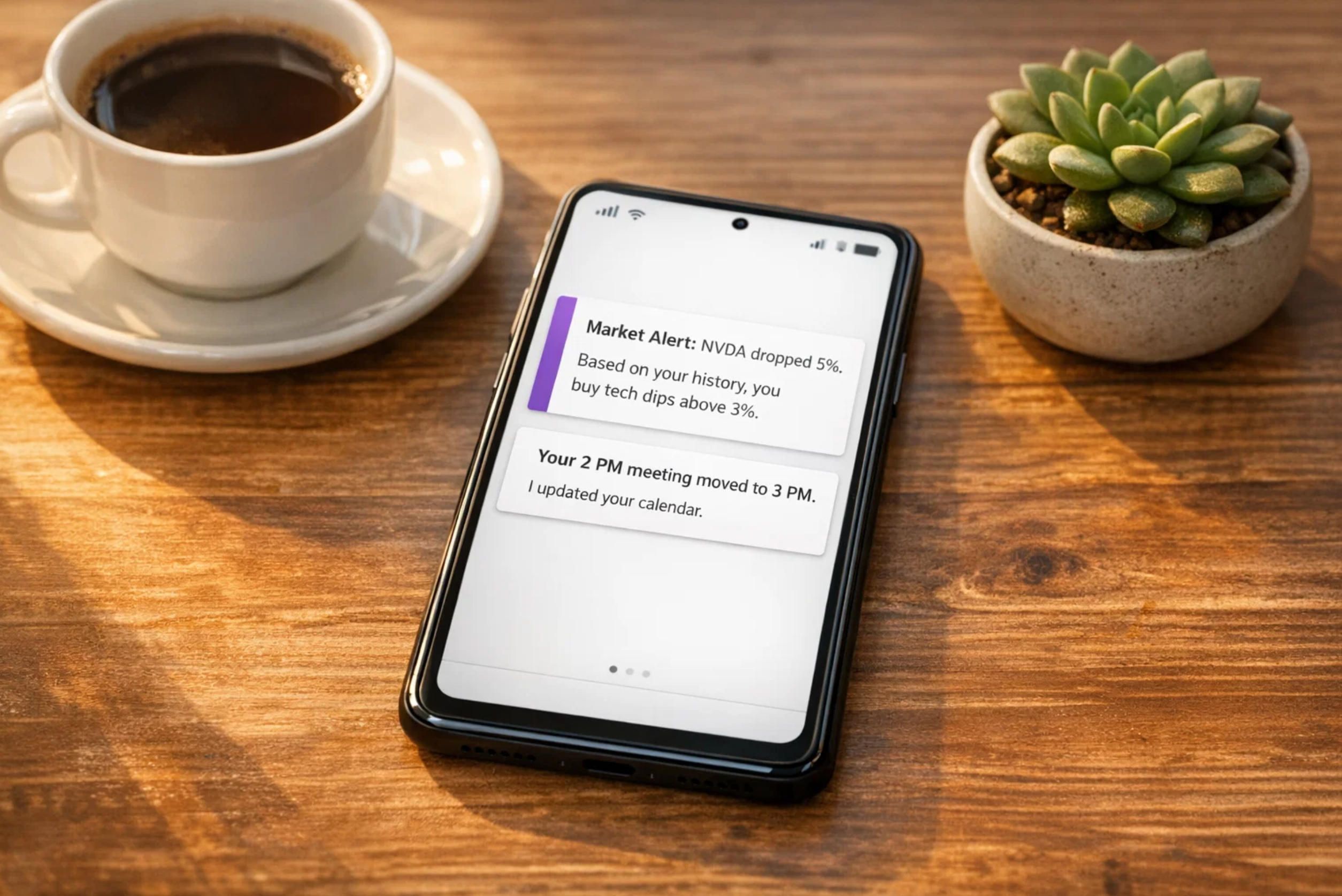

MemU's proactive monitoring capabilities make it particularly powerful for financial applications. A memU-powered trading agent learns your risk tolerance from historical decisions, tracks preferred sectors and asset classes, identifies response patterns to market events, and maintains portfolio rebalancing triggers. When a relevant market event occurs — like a significant stock price drop — the agent does not wait for you to ask. It proactively alerts you with context-aware analysis based on your personal trading history.

DevOps Infrastructure Monitoring

For DevOps teams, memU enables agents that watch infrastructure 24/7 and anticipate issues before they become outages. The agent accumulates knowledge about past incidents, correlates patterns across logs and metrics, and learns which alerts are actionable versus noise. Over time, the memU-powered DevOps agent becomes increasingly accurate at predicting problems and suggesting remediations — transforming from a reactive monitoring tool into a proactive SRE assistant.

AI Companions and Emotional Support Apps

MemU's memory continuity is critical for AI companion applications where trust depends on the agent genuinely remembering previous conversations. The agent detects behavior changes ("You have been online late again — should we try a screen-free night plan?"), follows up on absent users ("Haven't heard from you lately. Everything okay?"), and maintains emotional continuity across weeks and months of interaction.

Enterprise Multi-Agent Systems

The memMesh architecture demonstrates how memU enables multi-agent collaboration through shared memory. Multiple specialized agents — researchers, builders, reviewers — share a centralized memU memory store. When one agent discovers a relevant fact, it becomes immediately available to all others. Past failures are stored and automatically checked against new proposals, preventing regression and enabling organizational learning.

Customer Support and Sales Automation

MemU transforms customer interactions from isolated tickets into ongoing relationships. The agent remembers customer preferences ("Prefers email over calls, technical user"), anticipates needs ("Likely needs enterprise pricing info"), and acts proactively ("Sent proactive discount, prevented churn"). One documented case showed a memU-powered support agent completing an upsell worth $720 in monthly recurring revenue by leveraging proactive memory triggers.

A-Bots.com builds custom mobile applications that integrate memU's proactive intelligence into user-facing experiences. Whether you need a branded AI assistant for your customer support team, a trading companion app for your fintech startup, or an IoT monitoring dashboard powered by proactive alerts, our development team handles everything from memU backend integration to cross-platform mobile deployment on iOS and Android.

MemU Lifehacks: Getting the Best Results

Lifehack 1: Seed Your Memory Strategically

Do not wait for organic memory accumulation. Before deploying your memU agent to production, manually seed critical knowledge into the memory store. Import existing documents, customer profiles, technical runbooks, and historical incident data. This gives your agent a running start instead of a cold start.

Lifehack 2: Use Proactive Filtering with the where Clause

MemU supports a where clause for scoping continuous monitoring to specific data streams. Instead of letting your agent monitor everything (which burns tokens), use targeted filters to focus attention on the signals that matter most. This dramatically reduces costs while maintaining proactive behavior for high-value events.

Lifehack 3: Monitor Token Spend Per Memory Operation

MemU's dual-mode retrieval reduces costs by approximately 10x compared to always-on full-context approaches. However, deep reasoning calls still consume significant tokens. Track the ratio between monitoring-mode and reasoning-mode calls. If deep reasoning triggers too frequently, adjust your thresholds or refine your memory categories to improve lightweight retrieval accuracy.
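Tracking that ratio needs very little machinery. The sketch below is a standalone counter, not a memU feature; the class name, the mode labels, and the 20% alert threshold are assumptions you would tune for your own workload.

```python
from collections import Counter


class ModeTracker:
    """Count monitoring-mode vs reasoning-mode calls and their token spend."""

    def __init__(self, max_reasoning_share: float = 0.2) -> None:
        self.calls = Counter()
        self.tokens = Counter()
        self.max_reasoning_share = max_reasoning_share

    def record(self, mode: str, tokens: int) -> None:
        if mode not in ("monitor", "reason"):
            raise ValueError(f"unknown mode: {mode}")
        self.calls[mode] += 1
        self.tokens[mode] += tokens

    def reasoning_share(self) -> float:
        """Fraction of all calls that used deep reasoning."""
        total = sum(self.calls.values())
        return self.calls["reason"] / total if total else 0.0

    def needs_tuning(self) -> bool:
        """True when deep reasoning fires more often than the configured share."""
        return self.reasoning_share() > self.max_reasoning_share


tracker = ModeTracker()
for _ in range(7):
    tracker.record("monitor", tokens=50)      # illustrative token counts
for _ in range(3):
    tracker.record("reason", tokens=2000)
```

In this made-up run, 3 of 10 calls use deep reasoning, so `needs_tuning()` is true, and the reasoning calls account for 6,000 of the 6,350 tokens spent. That imbalance is why the ratio is worth watching.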

Lifehack 4: Version Control Your Memory Files

Since memU stores memories as Markdown files, you can put them under Git version control. This gives you a complete audit trail of what your agent remembers, when it learned something, and how its knowledge evolved over time. It also enables easy rollback if a bad memory injection corrupts your agent's behavior.

Lifehack 5: Use Multiple LLM Profiles

MemU supports named LLM configurations (profiles) that assign different models to different operations. Use a fast, cheap model (like GPT-4o-mini or Haiku) for routine monitoring and memory extraction, and reserve expensive models (like Claude Opus or GPT-4) for deep reasoning when it truly matters. This optimization can cut your monthly API costs by 60-70% without sacrificing quality on important decisions.
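The effect of splitting operations across models is easy to estimate before you commit to a configuration. The sketch below is plain arithmetic, not memU's profile syntax: the operation names are hypothetical, and the prices are placeholders rather than real vendor pricing.

```python
# Hypothetical mapping of operations to models.
PROFILES = {
    "monitoring": "gpt-4o-mini",
    "extraction": "gpt-4o-mini",
    "deep_reasoning": "gpt-4",
}

# Placeholder prices in USD per million tokens; NOT real vendor pricing.
PRICE_PER_MTOK = {"gpt-4o-mini": 1.0, "gpt-4": 20.0}


def monthly_cost(usage_mtok: dict[str, float], profiles: dict[str, str]) -> float:
    """Sum price * volume over operations, given an operation -> model mapping."""
    return sum(PRICE_PER_MTOK[profiles[op]] * mtok for op, mtok in usage_mtok.items())


# Illustrative monthly usage in millions of tokens per operation.
usage = {"monitoring": 5.0, "extraction": 2.0, "deep_reasoning": 3.0}

split = monthly_cost(usage, PROFILES)
single = monthly_cost(usage, {op: "gpt-4" for op in usage})
saving = 1 - split / single
```

With these invented numbers the split configuration costs 67 against 200 for a single expensive model. The actual saving depends entirely on your real prices and on how much of the traffic needs deep reasoning.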

Lifehack 6: Implement Memory Hygiene Routines

Over time, memory stores accumulate outdated or contradictory information. Schedule periodic memory cleanup routines where your agent reviews and consolidates its knowledge base. MemU's evolving memory system naturally decays unused memories, but actively pruning stale entries keeps retrieval fast and accurate.
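A scheduled cleanup can start as a simple staleness filter. The sketch below assumes each item carries a `last_accessed` timestamp and uses a 90-day idle window; both the field name and the window are illustrative choices, not memU settings.

```python
from datetime import datetime, timedelta


def prune_stale(items: list[dict], now: datetime, max_idle_days: int = 90):
    """Split items into (kept, pruned) by time since last access."""
    cutoff = now - timedelta(days=max_idle_days)
    kept, pruned = [], []
    for item in items:
        (kept if item["last_accessed"] >= cutoff else pruned).append(item)
    return kept, pruned


now = datetime(2026, 3, 1)
items = [
    {"text": "Uses Cursor as editor", "last_accessed": datetime(2026, 2, 20)},
    {"text": "Was evaluating a CI vendor", "last_accessed": datetime(2025, 9, 1)},
]
kept, pruned = prune_stale(items, now)
```

Returning the pruned items instead of deleting them outright lets you review or archive them first, which matters when the store is the agent's only record of a fact.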

"MemU is like giving your AI a diary. Except the diary is organized better than anything I have ever written, and it never loses the pen." — A Python developer at a hackathon

Can MemU Help Organize Drone Defense Systems?

This is a fascinating question that sits at the intersection of AI agent memory and physical security infrastructure. The short answer is: memU itself is not a counter-drone system, but its proactive memory architecture can serve as a critical intelligence layer in larger drone defense frameworks.

Modern counter-drone defense systems like Dedrone, Fortem DroneHunter, and Lockheed Martin's Sanctum all rely on AI-driven detection, classification, and response. These systems process massive volumes of sensor data — radar returns, RF signatures, visual feeds, acoustic profiles — and must make rapid decisions about whether detected objects are threats and how to respond.

Where memU becomes valuable is in the persistent learning and pattern recognition layer that sits above real-time detection. Consider these scenarios.

A memU-powered defense agent monitors drone detection logs across multiple sites over weeks and months. It learns patterns: which flight paths are common for legitimate commercial drones, what times of day unauthorized drones typically appear, which RF signatures correlate with specific drone models, and what weather conditions affect detection accuracy. Over time, the memU agent builds a structured knowledge base of the local drone threat landscape that continuously evolves as new data arrives.

When an anomalous detection occurs, the memU agent does not just flag it — it provides context from its accumulated memory. It can tell operators that this particular RF signature was last seen near the facility three weeks ago, that similar flight patterns preceded a security incident at a neighboring site, or that the current approach vector does not match any known commercial drone corridor in the area. This proactive intelligence layer transforms raw sensor alerts into contextual threat assessments.

MemU's multi-agent architecture also maps well onto distributed defense networks. A memMesh-style deployment could enable memU agents at multiple defense sites to share learned patterns through a centralized PostgreSQL memory store, effectively creating a collective intelligence that learns from every encounter across the entire network.

The global counter-drone defense market exceeded $4 billion in 2025, with the autonomous and AI-enhanced segment growing rapidly. NATO issued a call to industry in January 2026 specifically for improved counter-UAS capabilities. The Pentagon's Replicator 2 initiative is deploying AI-powered interceptor drones like the Fortem DroneHunter F700 with autonomous patrol and capture capabilities.

A-Bots.com has direct experience building IoT monitoring systems, real-time data processing pipelines, and mobile control interfaces for hardware devices. Our work on the Shark Clean robot vacuum controller demonstrated our ability to build mobile applications that interface with autonomous hardware through complex communication protocols. The same architectural expertise — real-time WebSocket connections, sensor data processing, push notification systems, and cross-platform mobile UIs — applies directly to building the control and monitoring interfaces that memU-powered drone defense systems would require.

Building a production drone defense system with memU would require integrating the memory framework with specialized sensor hardware (radar, RF detectors, cameras), real-time data processing pipelines, and response automation systems. This is exactly the kind of complex, multi-layer IoT project that A-Bots.com specializes in. If your organization is exploring AI-enhanced security systems, our team can assess the feasibility, design the architecture, and build the custom solution from sensor integration to mobile command interface.

Where MemU Is Already Being Used

MemU's ecosystem is expanding rapidly across several domains. The NevaMind-AI GitHub organization hosts multiple companion projects: memUBot (described as "The Enterprise-Ready OpenClaw — Your Proactive AI Assistant That Remembers Everything"), memU-server (the FastAPI backend wrapper), memU-ui (the web management interface), and memU-sdk-go (a Go SDK for memU clients).

The memU framework integrates with OpenClaw as a memory backend, replacing or augmenting OpenClaw's default SQLite-based memory system. Several OpenClaw users have reported significantly improved memory recall after switching to memU, particularly for cross-project queries and long-term preference tracking.

Community developers have built projects like memMesh (a multi-agent system with shared memU memory), and integrations exist for n8n (the workflow automation platform), LangGraph, AutoGPT, Dify, and LlamaIndex. The memU team ran a PR Hackathon in January 2026, offering cash rewards for contributions across integration, testing, documentation, and new LLM provider support tracks.

In the commercial space, memU's Response API is being used in emotional companion apps, intelligent sales assistants, educational AI tutors, and enterprise customer support systems. The framework's ability to maintain emotional continuity and proactive engagement makes it particularly valuable for applications where user trust depends on the AI feeling genuinely present and attentive.

Integrating MemU with Mobile Applications

The combination of memU's proactive memory and a well-designed mobile interface creates AI assistant experiences that feel genuinely personal. Here is where A-Bots.com's mobile development expertise creates the most value.

A custom React Native or native iOS/Android application connected to a memU backend delivers push notifications triggered by proactive memory events ("Your morning briefing is ready based on your schedule and news preferences"), persistent conversation history that survives app reinstalls and device switches, personalized UI that adapts based on learned user preferences, biometric authentication protecting sensitive memory data, and offline capabilities with memory sync when connectivity returns.

Building this integration requires expertise in real-time WebSocket communication (for live agent interactions), REST API integration (for memU's memory endpoints), state management across sessions (to ensure the mobile client and memU backend stay synchronized), and secure credential storage (to protect API keys and user authentication tokens).

A-Bots.com has delivered exactly these architectures across our portfolio of over 70 projects. Our experience with React Native, Node.js, Python, and Django maps directly onto the memU technology stack. Whether you need a consumer-facing AI companion app, an internal enterprise assistant, or a specialized monitoring dashboard, we build the complete solution — memU backend, API layer, mobile client, and ongoing support.

MemU Security Considerations

Any framework that stores persistent knowledge about users must take security seriously. MemU stores memories as Markdown files and/or in PostgreSQL, which means your standard infrastructure security practices apply.

Encrypt the PostgreSQL database at rest and in transit. Use strong passwords and restrict network access to the database port. If running memU-server, deploy it behind a reverse proxy with SSL termination. Implement role-based access control for multi-tenant deployments using memU's UserConfig system.

For memU's cloud API, treat your Bearer token like a password. Set API spending limits at the provider level to prevent runaway token costs. Review the memory content periodically — since memU autonomously decides what to remember, it may capture information you did not intend to persist.
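Because memories are readable text, a periodic review can be partly automated with a pattern scan. The sketch below is a minimal example with two illustrative patterns (email addresses and strings shaped like `sk-` style API keys); it is not a memU feature and is no substitute for a proper data-loss-prevention tool.

```python
import re

# Illustrative patterns only; extend them to match what counts as sensitive for you.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}


def scan(markdown: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(markdown.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


sample = (
    "- Prefers email over calls\n"
    "- Contact: jane@example.com\n"
    "- Key: sk-abcdefghijklmnop1234\n"
)
findings = scan(sample)
```

The first line mentions email only as a word and is not flagged; the second and third lines are reported with their line numbers so a reviewer can decide whether to redact them.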

A-Bots.com provides professional security auditing for memU deployments, covering database configuration, API key management, memory content review, and network architecture hardening.

"The best thing about memU is that my AI agent finally remembers me. The worst thing is that it also remembers everything embarrassing I said at 2 AM." — MemU contributor on GitHub

Conclusion

MemU represents a genuine architectural shift in how AI agents handle memory. Instead of the stateless, amnesiac chatbots that have defined the first wave of LLM applications, memU enables agents that learn, adapt, anticipate, and act — continuously, across weeks and months, at a fraction of the token cost that brute-force context windows would demand.

The framework's three-layer hierarchical memory, dual-mode retrieval system, transparent Markdown storage, and upcoming Intention Layer combine to create infrastructure that makes always-on proactive agents economically and technically viable. With 92.09% accuracy on the Locomo benchmark, memU has demonstrated that its approach is not just conceptually elegant but practically reliable.

Whether you are building a personal assistant, a trading bot, a DevOps monitor, an AI companion, or even exploring AI-enhanced security systems for drone defense, memU provides the memory layer that transforms reactive tools into proactive partners. The ecosystem is young but growing fast, with integrations for OpenClaw, LangGraph, n8n, and a thriving community of developers pushing the boundaries of what proactive agents can do.

If you are ready to build a memU-powered application, A-Bots.com is ready to build it with you. Contact us at info@a-bots.com for a free technical consultation. We will assess your use case, recommend the optimal architecture, and deliver a production-ready solution from concept through launch and ongoing support.


Copyright © Alpha Systems LTD All rights reserved.
